Cohen’s kappa coefficient is a statistic that measures inter-rater agreement for qualitative (categorical) items. It is generally considered more robust than a simple percent-agreement calculation, since kappa (k) takes into account the agreement that would occur by chance. Cohen’s kappa measures the agreement between two raters who each classify N items into C mutually exclusive categories.

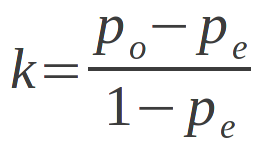

Cohen’s kappa coefficient is defined by the following formula:

Formula

$$k = \frac{p_o - p_e}{1 - p_e} = 1 - \frac{1 - p_o}{1 - p_e}$$

Where:

· $p_o$ = relative observed agreement among raters.

· $p_e$ = the hypothetical probability of chance agreement.
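The two forms of the formula are equivalent. As a short sketch of the missing step, rewrite the numerator $p_o - p_e$ as $(1 - p_e) - (1 - p_o)$ and divide through:

$$k = \frac{p_o - p_e}{1 - p_e} = \frac{(1 - p_e) - (1 - p_o)}{1 - p_e} = 1 - \frac{1 - p_o}{1 - p_e}$$

Read this way, k is one minus the ratio of observed disagreement to the disagreement expected by chance.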

Both $p_o$ and $p_e$ are computed from the observed data; $p_e$ uses each rater's observed category frequencies as the probability of that rater randomly choosing each category. If the raters are in complete agreement, then $k = 1$. If there is no agreement among the raters other than what would be expected by chance (as given by $p_e$), then $k = 0$; a negative value indicates agreement worse than chance.
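The definition above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the function name `cohen_kappa` and the choice to pass two equal-length lists of labels are assumptions made for this example, and the two small inputs are made up to show the boundary cases.

```python
from collections import Counter


def cohen_kappa(rater_a, rater_b):
    """Cohen's kappa for two equal-length sequences of category labels."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must label the same non-empty set of items")
    n = len(rater_a)

    # p_o: fraction of items on which the two raters gave the same label.
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / n

    # p_e: chance agreement, from each rater's own label frequencies.
    freq_a = Counter(rater_a)
    freq_b = Counter(rater_b)
    p_e = sum((freq_a[c] / n) * (freq_b[c] / n) for c in freq_a)

    if p_e == 1.0:
        # Both raters used one identical category throughout; kappa is undefined.
        raise ZeroDivisionError("kappa is undefined when p_e equals 1")
    return (p_o - p_e) / (1 - p_e)


# Complete agreement gives k = 1.
print(cohen_kappa(["yes", "no", "yes", "no"], ["yes", "no", "yes", "no"]))  # 1.0
# Agreement no better than chance gives k = 0.
print(cohen_kappa(["yes", "yes", "no", "no"], ["yes", "no", "yes", "no"]))  # 0.0
```

The explicit check for $p_e = 1$ is a design choice for this sketch: in that case the denominator is zero and the statistic has no defined value.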

Example

Problem Statement:

Suppose that you were analyzing data related to a group of 50 people applying for a grant. Each grant proposal was read by two readers, and each reader said either “Yes” or “No” to the proposal. Suppose the counts were as follows, where A and B are the readers; the main diagonal (top-left to bottom-right) shows the agreements and the other diagonal shows the disagreements:

| | B: Yes | B: No |
| --- | --- | --- |
| A: Yes | 20 | 5 |
| A: No | 10 | 15 |

Calculate Cohen’s kappa coefficient.

Solution:

Note that there were 20 proposals that were granted by both reader A and reader B and 15 proposals that were rejected by both readers. Thus, the observed proportionate agreement is

$$p_o = \frac{20 + 15}{50} = 0.70$$

To calculate $p_e$ (the probability of random agreement), we note that:

· Reader A said “Yes” to 25 applicants and “No” to 25 applicants. Thus reader A said “Yes” 50% of the time.

· Reader B said “Yes” to 30 applicants and “No” to 20 applicants. Thus reader B said “Yes” 60% of the time.

Assuming the two readers rate independently, we use the formula P(A and B) = P(A) × P(B), where P is the probability of an event occurring.

The probability that both of them would say “Yes” randomly is 0.50 × 0.60 = 0.30, and the probability that both of them would say “No” is 0.50 × 0.40 = 0.20. Thus the overall probability of random agreement is $p_e$ = 0.30 + 0.20 = 0.50.

So now applying our formula for Cohen’s Kappa we get:

$$k = \frac{p_o - p_e}{1 - p_e} = \frac{0.70 - 0.50}{1 - 0.50} = 0.40$$
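The worked example can be checked with a short Python sketch that starts from the table of counts. The function name `kappa_from_table` and the convention that rows are reader A and columns are reader B are assumptions made for this illustration.

```python
def kappa_from_table(table):
    """Return (p_o, p_e, k) for a square table of counts.

    table[i][j] is the number of items that reader A placed in
    category i and reader B placed in category j.
    """
    n = sum(sum(row) for row in table)

    # Observed agreement: counts on the main diagonal.
    p_o = sum(table[i][i] for i in range(len(table))) / n

    # Chance agreement: product of the two readers' marginal proportions.
    row_totals = [sum(row) for row in table]        # reader A
    col_totals = [sum(col) for col in zip(*table)]  # reader B
    p_e = sum((r / n) * (c / n) for r, c in zip(row_totals, col_totals))

    return p_o, p_e, (p_o - p_e) / (1 - p_e)


# Grant example: rows are reader A (Yes, No), columns are reader B (Yes, No).
grants = [[20, 5], [10, 15]]
p_o, p_e, k = kappa_from_table(grants)
print(f"p_o = {p_o:.2f}, p_e = {p_e:.2f}, k = {k:.2f}")
# p_o = 0.70, p_e = 0.50, k = 0.40
```

The values are formatted to two decimals because floating-point arithmetic gives a result marginally below 0.4 rather than exactly 0.4.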
